The 4th International Competition on Human Identification at a Distance 2023

Overview

Welcome to the 4th International Competition on Human Identification at a Distance (HID 2023)!

The competition focuses on human identification at a distance (HID) in videos. The dataset for the competition is SUSTech-Competition, a new dataset collected in 2022 and released to the scientific community for the first time. It contains 859 subjects. Following the success of the previous three competitions, we believe this competition will also be successful and will promote research on HID.

Awards

Our sponsor, Watrix Technology, will provide 6 awards (19,000 CNY in total, ~2,850 USD) to the top 6 teams in the second phase. We are grateful to Watrix Technology for sponsoring the competition.

- First Prize (1 team): 10,000 CNY (~1,500 USD)

- Second Prize (2 teams): 3,000 CNY (~450 USD) each

- Third Prize (3 teams): 1,000 CNY (~150 USD) each

CNY stands for Chinese Yuan.

Important Dates

The timeline for the competition is as follows.

- Competition starts: February 15, 2023

- Deadline of the 1st phase: April 5, 2023

- Deadline of the 2nd phase: April 15, 2023

- Competition results announcement: May 1, 2023

- Method description submission (only Top 10 teams): May 5, 2023

How to Join This Competition?

The competition is open to everyone except members of the organizers' teams.

Click CodaLab HID2023 to register and join the competition.

Note: Please use your institutional email to register for the competition; otherwise, the registration will not be approved.

If you have any questions, please contact Prof. Shiqi Yu <yusq@sustech.edu.cn> and CC Mr. Jingzhe Ma <majz2020@mail.sustech.edu.cn>.

If you have a WeChat account, you can scan the QR code (ref HID 2024) to join a WeChat group for this competition. Joining is optional. Notifications sent by email will also be announced in the WeChat group.

Competition Sample Code

You should develop all the source code you need for the competition. You can use OpenGait to help you start your training quickly. There are some well-trained gait recognition models in the OpenGait repo.

The HID 2023 code tutorials for OpenGait can be found here.

How to Achieve a Good Result

The techniques used in the previous competition are described in the competition summary paper: https://ieeexplore.ieee.org/abstract/document/10007993

S. Yu, Y. Huang, L. Wang, Y. Makihara, S. Wang, M. A. R. Ahad, and M. Nixon. “HID 2022: The 3rd International Competition on Human Identification at a Distance,” 2022 IEEE International Joint Conference on Biometrics (IJCB), Abu Dhabi, United Arab Emirates, 2022, pp. 1-9, doi: 10.1109/IJCB54206.2022.10007993.

@INPROCEEDINGS{hid2022,
  author={Yu, Shiqi and Huang, Yongzhen and Wang, Liang and Makihara, Yasushi and Wang, Shengjin and Rahman Ahad, Md Atiqur and Nixon, Mark},
  booktitle={2022 IEEE International Joint Conference on Biometrics (IJCB)},
  title={HID 2022: The 3rd International Competition on Human Identification at a Distance},
  year={2022},
  doi={10.1109/IJCB54206.2022.10007993}
}

Dataset and Evaluation Protocol

Dataset (New for HID 2023)

The test gallery set and the probe sets can be downloaded from the following sources:

- Baidu Netdisk

  - Test gallery (password: HID4)

  - Test probe (phase 1) (password: HID4)

  - Test probe (phase 2) (password: hid4)

- Google Drive

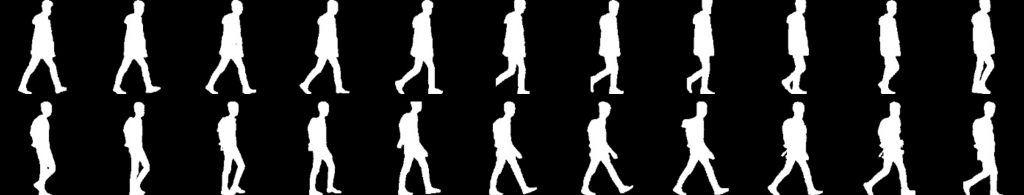

Since the accuracy in the previous competition was close to saturation, we will use a new dataset, SUSTech-Competition, for HID 2023. SUSTech-Competition was collected in the summer of 2022 with the approval of the Southern University of Science and Technology Institutional Review Board. It contains 859 subjects, and many variations, including clothing, carrying conditions, and view angles, were considered during data collection. To reduce participants' data pre-processing burden, we provide human body silhouettes in the competition. The silhouettes are obtained from the original videos by a deep person detector and a segmentation model provided by our sponsor, Watrix Technology.

All silhouette images have been resized to a fixed size of 128 x 128 pixels. We will not remove bad-quality silhouettes manually, so the data contains the kind of noise found in real applications. This noise makes the competition more challenging and also makes it a good simulation of real applications.

Unlike the previous competition, we will NOT provide a training set to participants in HID 2023. Participants can use any dataset, such as CASIA-B, OUMVLP, CASIA-E, and/or their own dataset, to train their algorithms. The cross-domain challenge should be considered in order to achieve good results. In the gallery set, there is only one sequence for each subject. The labels of the sequences in the gallery set are provided. The probe set contains 5 randomly selected sequences for each subject. In the first phase, only 10% of the probe set will be released to participants to avoid label hacking. The remaining 90% will be released in the second phase.
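With one labeled gallery sequence per subject, identification can be treated as matching each probe sequence to its closest gallery sequence. The sketch below illustrates one common way to do this and is not a method prescribed by the organizers. It assumes each sequence has already been converted to a fixed-length feature vector by some gait model. The function names `euclidean` and `identify`, the subject labels, and the toy 2-D feature values are all hypothetical.

```python
import math


def euclidean(a, b):
    """Euclidean distance between two equal-length feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def identify(probe_features, gallery):
    """Return, for each probe vector, the label of the nearest gallery entry.

    `gallery` maps a subject label to a single feature vector, mirroring
    the one-sequence-per-subject gallery described above.
    """
    predictions = []
    for probe in probe_features:
        best_label = min(gallery, key=lambda label: euclidean(probe, gallery[label]))
        predictions.append(best_label)
    return predictions


# Toy 2-D vectors standing in for real gait embeddings (assumed values).
gallery = {"subject_001": [0.0, 0.0], "subject_002": [1.0, 1.0]}
probes = [[0.1, -0.1], [0.9, 1.2], [0.4, 0.3]]
print(identify(probes, gallery))
```

In practice the feature vectors would come from a model trained on other datasets, so the distance measure and any feature normalization are design choices worth validating against the cross-domain gap.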

Evaluation protocol

The evaluation should be user-friendly and convenient for participants. It should also be fair and resistant to hacking. We designed detailed rules as follows:

- To avoid the ID labels of the probe set being hacked by numerous submissions, we will limit the number of submissions each day to 2. Only one CodaLab ID is allowed for a team.

- The accuracy will be evaluated automatically on CodaLab. The ranking will be updated on the scoreboard accordingly.

- The first phase will last about 50 days, but only 10% of the probe samples will be used for evaluation in the first phase.

- The second phase will last only 10 days. The remaining 90% of the probe samples will be used for evaluation. This data is different from that in the first phase.

- The top 10 teams on the final scoreboard need to send their programs to the organizers. The programs will be run to reproduce their results. The reproduced results should be consistent with the results shown on the CodaLab scoreboard.

Ethics

Privacy and human ethics are our concerns for the competition. Technologies should improve human life and not violate human rights.

The dataset SUSTech-Competition for the competition was collected by the Southern University of Science and Technology, China in 2022. The data collection and usage have been approved by the Southern University of Science and Technology Institutional Review Board. All subjects in the dataset signed agreements to acknowledge that the data could be used for research purposes only.

Performance metric

Rank-1 accuracy is used to evaluate the methods from different teams. It is straightforward and easy to implement:

$$\text{Accuracy} = \frac{TP}{N}$$

where TP denotes the number of true positives and N is the number of probe samples.
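As a minimal sketch of the metric, rank-1 accuracy can be computed by counting the probe samples whose predicted label equals the true label (TP) and dividing by the number of probe samples (N). The function name `rank1_accuracy` and the example labels are hypothetical. The official score is computed by CodaLab, so this is only useful for local checks on data whose labels you know.

```python
def rank1_accuracy(predicted_labels, true_labels):
    """Fraction of probe samples whose top-1 prediction is correct (TP / N)."""
    if len(predicted_labels) != len(true_labels):
        raise ValueError("predictions and ground truth must have the same length")
    if not true_labels:
        raise ValueError("at least one probe sample is required")
    true_positives = sum(p == t for p, t in zip(predicted_labels, true_labels))
    return true_positives / len(true_labels)


# Hypothetical predictions for four probe sequences; three are correct.
predicted = ["id_01", "id_02", "id_03", "id_01"]
truth = ["id_01", "id_02", "id_02", "id_01"]
print(rank1_accuracy(predicted, truth))
```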

Committees

Advisory Committee (Alphabetical order)

- Prof. Mark Nixon, University of Southampton, UK

- Prof. Tieniu Tan, Institute of Automation, Chinese Academy of Sciences, China

- Prof. Yasushi Yagi, Osaka University, Japan

Organizing Committee (Alphabetical order)

- Prof. Md. Atiqur Rahman Ahad, University of East London, UK

- Prof. Yongzhen Huang, Beijing Normal University, China; Watrix Technology Co. Ltd, China

- Prof. Yasushi Makihara, Osaka University, Japan

- Prof. Liang Wang, Institute of Automation, Chinese Academy of Sciences, China

- Prof. Shiqi Yu, Southern University of Science and Technology, China

FAQ

Q: Can I use data outside of the training set to train my model?

A: Yes, you can. But you must describe what data you use and how you use it in the description of the method.

Q: How many members can my team have?

A: We do not limit the number of members in your team. But only one participant from each team can submit results; otherwise, the results will be considered invalid.

Q: Who cannot participate in the competition?

A: The members of the organizers’ research group cannot participate in the competition. The employees and interns at the sponsor company cannot participate in the competition.

Leaderboard

https://codalab.lisn.upsaclay.fr/competitions/10568#results